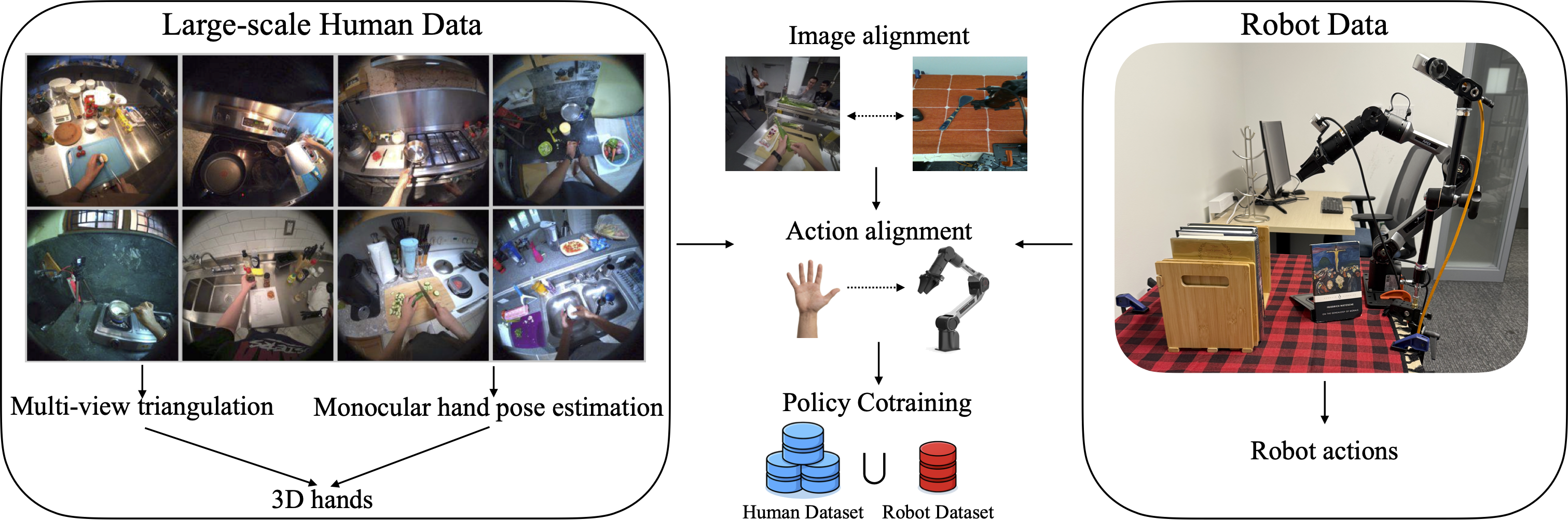

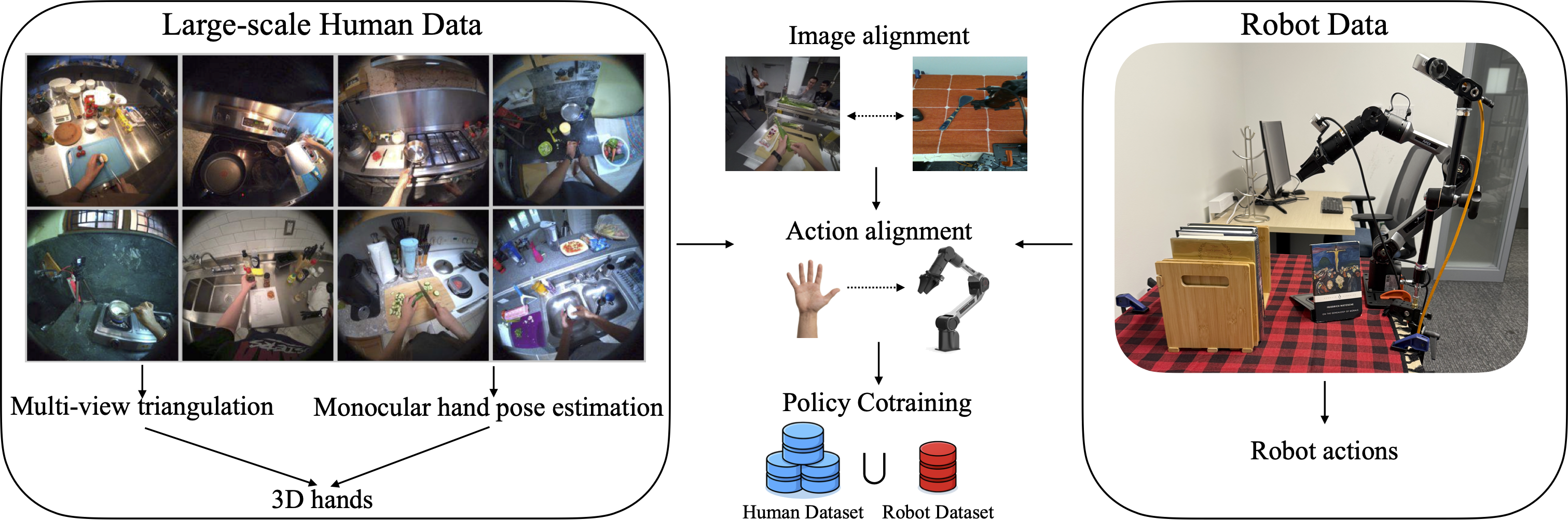

System Diagram

We build TriHands, a dataset of everyday cooking videos with accurate 3D hands produced by a multi-view triangulation pipeline.

Below we visualize 2D projections of our triangulated hand keypoints from multiple synchronized camera views.
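The page does not spell out the triangulation pipeline itself, so the following is only a minimal sketch of the general idea, assuming calibrated 3x4 projection matrices for each synchronized view and matched 2D detections of the same hand keypoint. It uses standard linear (DLT) triangulation followed by reprojection; the function names `triangulate_point` and `project`, and the toy camera parameters, are hypothetical and not taken from the TriHands code.

```python
import numpy as np


def triangulate_point(proj_mats, pts_2d):
    """Linear (DLT) triangulation of one 3D point from two or more views.

    proj_mats: iterable of 3x4 camera projection matrices (assumed calibrated).
    pts_2d:    iterable of (u, v) pixel observations, one per camera.
    """
    rows = []
    for P, (u, v) in zip(proj_mats, pts_2d):
        # Each view contributes two linear constraints on the homogeneous point.
        rows.append(u * P[2] - P[0])
        rows.append(v * P[2] - P[1])
    A = np.stack(rows)
    # The solution is the right singular vector with the smallest singular value.
    _, _, vt = np.linalg.svd(A)
    X = vt[-1]
    return X[:3] / X[3]


def project(P, X):
    """Project a 3D point into pixel coordinates with a 3x4 projection matrix."""
    x = P @ np.append(X, 1.0)
    return x[:2] / x[2]


# Toy two-camera rig (hypothetical intrinsics and baseline, for illustration only).
K = np.array([[500.0, 0.0, 320.0],
              [0.0, 500.0, 240.0],
              [0.0, 0.0, 1.0]])
P1 = K @ np.hstack([np.eye(3), np.zeros((3, 1))])
P2 = K @ np.hstack([np.eye(3), np.array([[-0.2], [0.0], [0.0]])])

keypoint_3d = np.array([0.1, -0.05, 2.0])
observations = [project(P1, keypoint_3d), project(P2, keypoint_3d)]
recovered = triangulate_point([P1, P2], observations)
print(np.round(recovered, 6))
```

With noisy real detections, the reprojection error `project(P, recovered) - observation` in each view is a common way to judge how well a triangulated keypoint agrees with the 2D evidence.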


Side-by-side comparison of zero-shot policy rollouts on unseen test environments.

Human Cotraining (ours) consistently outperforms the Robot Only baseline.

Existing human video datasets used for cotraining robot manipulation policies largely consist of curated demonstrations collected by roboticists. The human motions in these videos are carefully orchestrated to resemble robot motions, and the 3D hand poses are highly accurate because they are captured with specialized devices. A more plentiful and diverse source of data is everyday Internet videos of humans accomplishing daily tasks. To study cotraining in this setting, we curate a large-scale dataset of 532 videos of natural human activity (cooking) with over 28 hours of high-quality hand labels. We find that hand-label quality affects transfer performance, but even with high-quality hand labels, the inherent motion gap between humans and robots can hinder transfer if the policy network cannot properly specialize to each embodiment. We propose a cotraining recipe based on a token-level fusion architecture, embodiment-specific action encoders and decoders, and a loss that upweights robot data. Our recipe yields consistently improved scaling when cotraining on human data, with a mean success rate improvement of +29.7% in the low-robot-data regime across six manipulation tasks.
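The abstract does not state how the loss upweights robot data. As one possible reading, the sketch below computes a weighted mean of per-sample losses in which robot samples receive a larger weight than human samples. The function name `cotraining_loss`, the `robot_weight` parameter, and its default value are assumptions made for illustration, not the paper's actual formulation.

```python
def cotraining_loss(per_sample_losses, is_robot, robot_weight=2.0):
    """Weighted mean of per-sample losses over a mixed human/robot batch.

    per_sample_losses: list of floats, one loss value per sample.
    is_robot:          list of bools, True where the sample is robot data.
    robot_weight:      hypothetical multiplier applied to robot samples;
                       human samples keep weight 1.0.
    """
    if len(per_sample_losses) != len(is_robot):
        raise ValueError("losses and embodiment flags must have equal length")
    weights = [robot_weight if robot else 1.0 for robot in is_robot]
    weighted_sum = sum(w * l for w, l in zip(weights, per_sample_losses))
    # Normalize by the total weight so the loss scale stays comparable
    # regardless of the human/robot mix in the batch.
    return weighted_sum / sum(weights)


# A batch with one robot sample (loss 1.0) and one human sample (loss 3.0).
print(cotraining_loss([1.0, 3.0], [True, False], robot_weight=3.0))  # 1.5
```

Normalizing by the sum of weights is a design choice in this sketch: it keeps the loss magnitude stable as the ratio of human to robot samples changes, so the weight only shifts relative emphasis between the two embodiments.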

Training Environments (10 scenes)

Test Environments (unseen objects & backgrounds)

We thank the EgoExo4D team for providing the original dataset and calibration data. This work was supported in part by [funding sources to be added].

@inproceedings{anonymous2026cotraining,
  title = {What Matters When Cotraining Robot Manipulation Policies on Everyday Human Videos?},
  author = {Anonymous},
  booktitle = {TBD},
  year = {2026}
}